You’ve got agents. They work individually. But the moment they need to coordinate, the whole system starts to slip.

AI agent orchestration is the coordination layer that manages how multiple AI agents are assigned tasks, share memory, use tools, and hand off work to each other. Without it, agents operate in isolation. With it, they function as a reliable system that holds up under production load.

Getting multi-agent AI systems into production is harder than building each agent separately. Bytes Technolab, an AI-first Product Engineering partner, identifies orchestration design as the gap between a demo and a system that ships.

Why AI Agent Orchestration Breaks When You Try to Scale

AI agent orchestration doesn’t fail at proof-of-concept. It fails the moment your system needs to handle concurrent tasks, recover from a failed subtask, or route context between agents that weren’t designed to share state.

Agents start duplicating work, latency climbs past acceptable thresholds, and a single failure propagates across the pipeline with no recovery path. These aren’t edge cases: they’re the default behavior of any multi-agent system built without a coordination contract.

What Actually Causes Multi-Agent Systems to Break in Production?

The root cause isn’t individual agent quality: it’s the absence of a coordination contract. When agents are built in isolation, there’s no shared model of who owns which task or how conflicts get resolved.

Deloitte’s 2024 analysis flagged that 40% of agentic AI projects are at risk of cancellation by 2027, with poor architectural design cited as the primary driver. A system with five specialized agents and no orchestration logic isn’t a multi-agent system: it’s five single-agent pipelines sharing a name.

Three failure modes account for the majority of production breakdowns:

- Fragmented state: Each agent holds its own working memory with no shared context layer, so later agents act on incomplete information.

- Unhandled failures: When one agent stalls or errors, there is no supervisor logic to reassign the task, retry with constraints, or escalate.

- Latency compounding: Sequential agent chains with no parallelization make total response time equal to the sum of all agent execution times.
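The latency-compounding point can be made concrete with a small sketch. The agent names and durations below are invented for illustration, and `asyncio.sleep` stands in for a real agent call; the comparison only holds when the subtasks are genuinely independent.

```python
import asyncio
import time


async def run_agent(name: str, seconds: float) -> str:
    # Hypothetical stand-in for a real agent call; sleeping simulates work.
    await asyncio.sleep(seconds)
    return f"{name} done"


async def run_sequential(durations: dict[str, float]) -> float:
    # Each agent waits for the previous one, so elapsed time is roughly the sum.
    start = time.perf_counter()
    for name, seconds in durations.items():
        await run_agent(name, seconds)
    return time.perf_counter() - start


async def run_parallel(durations: dict[str, float]) -> float:
    # Independent agents run concurrently, so elapsed time is roughly the maximum.
    start = time.perf_counter()
    await asyncio.gather(*(run_agent(n, s) for n, s in durations.items()))
    return time.perf_counter() - start


# Illustrative durations only (in seconds).
DURATIONS = {"research": 0.05, "summarize": 0.03, "validate": 0.02}

if __name__ == "__main__":
    seq = asyncio.run(run_sequential(DURATIONS))
    par = asyncio.run(run_parallel(DURATIONS))
    print(f"sequential: {seq:.2f}s, parallel: {par:.2f}s")
```

With these numbers the sequential chain takes about the sum of the three durations, while the concurrent run takes about as long as the slowest agent.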

Most teams treat orchestration as plumbing added after agents work. That assumption causes production failures, especially without guidance from an AI agent development partner.

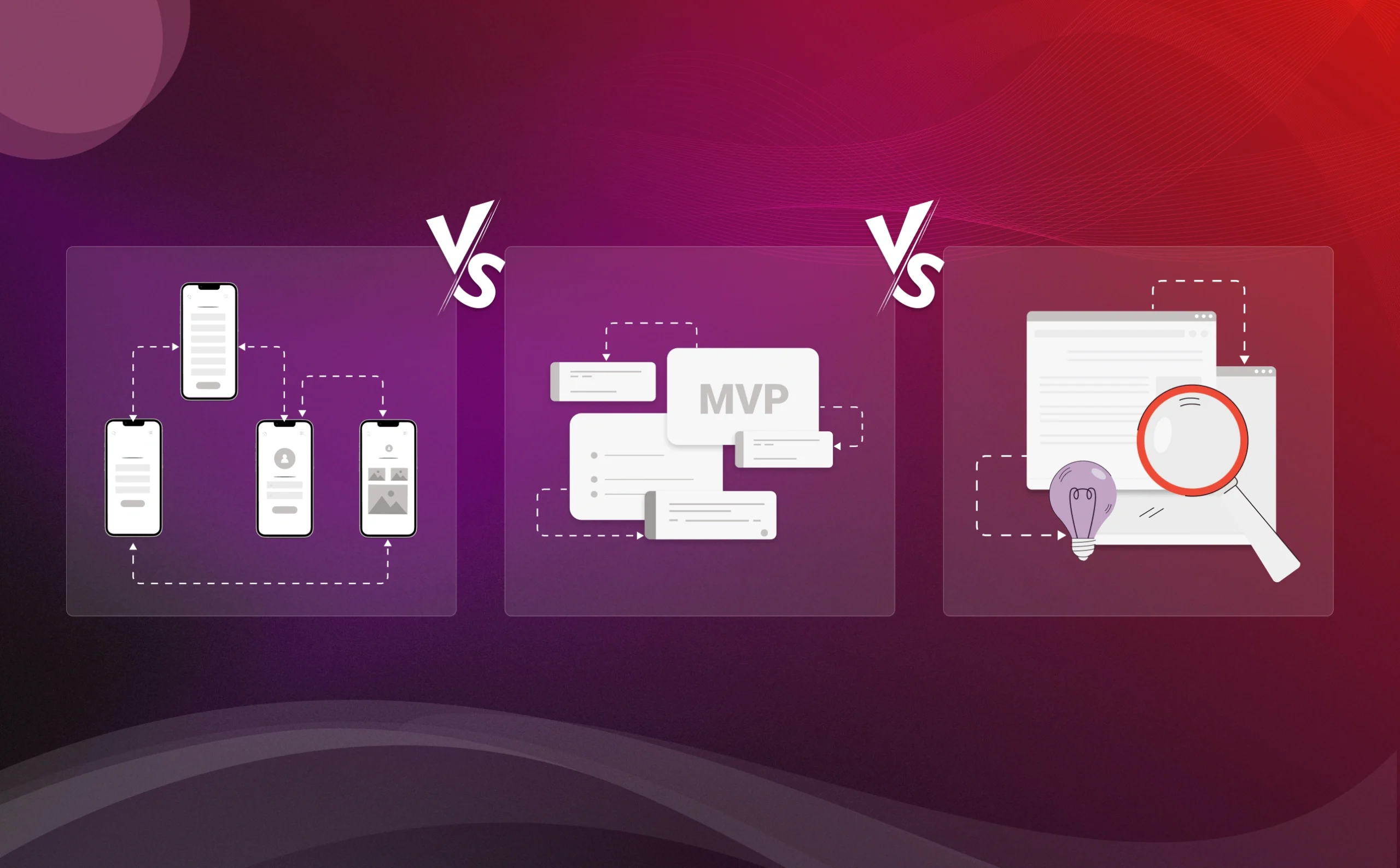

AI Agent Orchestration vs Agent Orchestration: The Difference Most Teams Miss

Most engineering teams use these terms interchangeably. They refer to fundamentally different system design problems, and the confusion leads to architecture decisions that limit what a system can ever do.

| Dimension | Agent Orchestration | AI Agent Orchestration |
| --- | --- | --- |
| Scope | Coordinates task execution between defined agents | Coordinates reasoning, memory, tool use, and task execution across adaptive agents |
| Intelligence Layer | Task routing is rule-based or predefined | Task routing adapts based on context, prior results, and agent capability |
| Autonomy | Agents follow fixed instructions | Agents make decisions within delegated authority boundaries |
| Coordination Depth | Sequential or parallel execution chains | Dynamic re-routing, mid-task replanning, agent-to-agent negotiation |
| Scalability | Scales well for known, bounded task sets | Designed for open-ended, branching, or ambiguous task environments |
| Typical Use Cases | RPA-style automation, workflow pipelines | Research agents, code generation systems, enterprise AI copilots |

Build agent orchestration in a context that demands AI agent orchestration behavior, and you’ll hit an autonomy ceiling the first time the system encounters an unscripted task.

How AI Agent Orchestration Actually Works in Multi-Agent Systems

A working orchestration system runs five operational layers simultaneously. Removing any one of them opens a gap that only appears under production load.

Teams that wire agents together without defining these layers first are not building orchestrated systems. They’re building sequential pipelines and calling them a multi-agent architecture.

Each layer has a defined job. The system fails precisely at whichever one was skipped.

What Role Does a Multi-Agent Orchestrator Play in the System?

The multi-agent orchestrator is the decision layer above individual agents. It assigns tasks, tracks execution state, manages shared memory, and triggers replanning when agents fail.

It is not an agent: it is the coordination logic that makes agents work as a system. Without a task graph tracking what has completed, what is running, and what has failed, agents operate on incomplete information.
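One way to picture the task graph described above is a small in-memory structure that records each task's status and dependencies. This is a minimal sketch, not any framework's API: `Status`, `Task`, and `TaskGraph` are hypothetical names, and a production system would likely persist this state rather than hold it in a dict.

```python
from dataclasses import dataclass, field
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    RUNNING = "running"
    COMPLETED = "completed"
    FAILED = "failed"


@dataclass
class Task:
    task_id: str
    depends_on: tuple[str, ...] = ()
    status: Status = Status.PENDING


@dataclass
class TaskGraph:
    tasks: dict[str, Task] = field(default_factory=dict)

    def add(self, task_id: str, depends_on: tuple[str, ...] = ()) -> None:
        self.tasks[task_id] = Task(task_id, depends_on)

    def mark(self, task_id: str, status: Status) -> None:
        self.tasks[task_id].status = status

    def ready(self) -> list[str]:
        # Pending tasks whose dependencies have all completed.
        return [
            t.task_id
            for t in self.tasks.values()
            if t.status is Status.PENDING
            and all(self.tasks[d].status is Status.COMPLETED for d in t.depends_on)
        ]

    def blocked_by_failure(self) -> list[str]:
        # Pending tasks with at least one failed dependency: candidates for replanning.
        return [
            t.task_id
            for t in self.tasks.values()
            if t.status is Status.PENDING
            and any(self.tasks[d].status is Status.FAILED for d in t.depends_on)
        ]


graph = TaskGraph()
graph.add("research")
graph.add("summarize", depends_on=("research",))
graph.add("format", depends_on=("summarize",))
```

In this sketch, the orchestrator would dispatch whatever `ready()` returns and treat anything in `blocked_by_failure()` as a trigger for replanning or escalation.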

Five Components of a Working Orchestration Layer

- Role assignment: Each agent is scoped to a defined capability domain. Overlap causes conflicts; gaps cause task orphaning.

- Task routing: The orchestrator matches incoming tasks to agents based on availability, capability, and current load.

- Shared memory: A context layer, typically a vector store, that agents read from and write to across the execution cycle. The architecture behind shared memory and data pipelines follows similar principles to modern data modernization stacks.

- Tool access governance: Agents are granted access only to specific tools, and the orchestrator enforces this at runtime.

- Coordination loops: Feedback paths that let agents return results, trigger replanning, or request a handoff.

In frameworks like LangGraph and AutoGen, orchestration logic can live in a graph structure or supervisor agent, but it must exist: systems that skip this layer are linear pipelines with an AI label.
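Independent of any framework, the role assignment, task routing, and tool access governance components can be sketched in plain Python. Everything here is an illustrative assumption (`AgentSpec`, `Orchestrator`, the capability and tool names); it is not LangGraph or AutoGen code.

```python
from dataclasses import dataclass


@dataclass
class AgentSpec:
    name: str
    capabilities: frozenset[str]   # role assignment: what the agent may be asked to do
    allowed_tools: frozenset[str]  # tool governance: what the agent may call
    max_load: int = 2
    active_tasks: int = 0


class ToolAccessDenied(Exception):
    pass


class Orchestrator:
    def __init__(self, agents: list[AgentSpec]) -> None:
        self.agents = {a.name: a for a in agents}

    def route(self, capability: str) -> AgentSpec:
        # Match on capability and availability, then prefer the least-loaded agent.
        candidates = [
            a
            for a in self.agents.values()
            if capability in a.capabilities and a.active_tasks < a.max_load
        ]
        if not candidates:
            raise LookupError(f"no available agent for {capability!r}")
        chosen = min(candidates, key=lambda a: a.active_tasks)
        chosen.active_tasks += 1
        return chosen

    def authorize_tool(self, agent_name: str, tool: str) -> None:
        # Enforced by the orchestrator at call time, not left to the agent's prompt.
        if tool not in self.agents[agent_name].allowed_tools:
            raise ToolAccessDenied(f"{agent_name} may not call {tool}")


orchestrator = Orchestrator([
    AgentSpec("researcher", frozenset({"search"}), frozenset({"web_search"})),
    AgentSpec("writer", frozenset({"draft"}), frozenset({"doc_editor"})),
])
```

A `LookupError` from `route` is the task-orphaning case: no agent's role covers the capability, which is a design gap rather than a runtime glitch.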

Orchestration Patterns That Define Success or Failure in Production

Choosing the wrong orchestration pattern isn’t a minor inefficiency. It’s a structural mismatch that compounds every time task complexity increases.

In custom generative AI solutions, the pattern choice often determines whether a system survives scale. Five patterns cover the majority of production architectures.

The wrong choice doesn’t fail immediately. It accumulates: each additional task type, each new agent, each increase in concurrent load reveals another point where the pattern can’t hold.

Which Orchestration Pattern Fits Which System Design?

The right pattern must match the task structure, not the team’s comfort with a framework.

- Sequential: Tasks flow linearly from one agent to the next. Fails when parallelization would cut total latency by 60% or more.

- Parallel: The orchestrator distributes independent subtasks simultaneously, then aggregates outputs. Fails when tasks have hidden dependencies not mapped at design time.

- Orchestrator-Subagent: A primary orchestrator manages specialized subagents, assigning work dynamically based on task type and load. The standard pattern for enterprise-grade systems.

- Group Chat: All agents share a channel and contribute through dialogue. Poorly suited to strict latency requirements because conversation loops have no guaranteed termination.

- Handoff: One agent passes task ownership to another at the boundary of its capability. Degrades when agents lack shared context at the point of transfer.

The most common production error is choosing Sequential when the workload demands Orchestrator-Subagent, then patching for load instead of rebuilding from the correct pattern.
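For the Parallel pattern, hidden dependencies stop being hidden once they are written down as data. The sketch below assumes a hypothetical four-task workload and uses Python's standard-library `graphlib.TopologicalSorter` to group tasks into waves that are safe to run concurrently; it illustrates dependency mapping, not a full scheduler.

```python
from graphlib import TopologicalSorter


def parallel_waves(dependencies: dict[str, set[str]]) -> list[list[str]]:
    # Each wave contains tasks whose prerequisites were all satisfied by earlier waves.
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError if the dependencies are circular
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)
    return waves


# Hypothetical workload: each task maps to the set of tasks it depends on.
DEPENDENCIES = {
    "fetch_docs": set(),
    "fetch_metrics": set(),
    "summarize": {"fetch_docs"},
    "report": {"summarize", "fetch_metrics"},
}

if __name__ == "__main__":
    for number, wave in enumerate(parallel_waves(DEPENDENCIES), start=1):
        print(number, wave)
```

Here only the two fetch tasks can run side by side; `summarize` and `report` each need their own wave, so treating all four tasks as independent would be the design-time mapping error the Parallel pattern is vulnerable to.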

The Real Reason Most AI Agent Orchestration Systems Fail

Production failures in multi-agent systems have nothing to do with the quality of individual agents. The failure is almost always architectural, and the warning signs are visible in the design weeks before the first deployment.

Most teams that reach production with a failing system built something that worked in testing. The gap between testing and production is a missing layer, not a bug.

What Causes Agentic AI Solutions to Collapse Under Real-World Load?

Five root causes account for the majority of agentic AI solution failures in production, and they compound each other. Fix one, and the system breaks at the next constraint.

- Governance gaps: Agents can call tools, write to memory, or trigger downstream agents without any approval layer. In a 40-agent system, unconstrained access creates security exposure that scales with system size.

- Lack of observability: When a system fails, there is no trace data to identify which agent failed, what state it held, or what it was attempting. Debugging costs between $200 and $800 per hour of senior engineering time.

- Poor role design: A research agent that also summarizes, validates, and formats output is not a specialized agent: it’s a single-agent system with extra steps. Broad roles create bottlenecks that look like performance problems but are architecture problems.

- Cost explosion: Uncapped loops and poor task scoping cause token usage to scale faster than task complexity. One enterprise team reported a 340% token overage in their first production month because a loop had no termination condition.

- Scaling bottlenecks: If the orchestrator is a single LLM call that must complete before any agent proceeds, system throughput is bounded by that call’s latency. This is invisible in development and catastrophic at scale.
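The cost-explosion failure mode comes down to a loop with no termination condition. A minimal guard, assuming the orchestrator can observe token usage per step, might look like the following; `RunBudget`, `run_with_budget`, and the `(done, tokens)` step contract are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


class BudgetExceeded(Exception):
    pass


@dataclass
class RunBudget:
    max_tokens: int
    max_iterations: int
    tokens_used: int = 0
    iterations: int = 0

    def start_iteration(self) -> None:
        # Refuse to start another step once the iteration cap is reached.
        if self.iterations >= self.max_iterations:
            raise BudgetExceeded(f"iteration cap {self.max_iterations} reached")
        self.iterations += 1

    def charge(self, tokens: int) -> None:
        # Record usage and stop the run as soon as the token budget is exceeded.
        self.tokens_used += tokens
        if self.tokens_used > self.max_tokens:
            raise BudgetExceeded(f"token budget {self.max_tokens} exceeded")


def run_with_budget(step: Callable[[], tuple[bool, int]], budget: RunBudget) -> int:
    # `step` performs one agent iteration and returns (done, tokens_used_by_step).
    while True:
        budget.start_iteration()
        done, tokens = step()
        budget.charge(tokens)
        if done:
            return budget.iterations
```

Because the caps live in the loop driver rather than in a prompt, an agent that never signals completion is stopped by a `BudgetExceeded` error instead of running until someone notices the bill.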

These are design problems, not implementation problems. Teams that define roles precisely and instrument every agent action will not hit these walls.

Building Scalable AI Agent Orchestration Systems That Actually Work

The shift from a fragile prototype to a production-stable system comes down to six architectural commitments, not framework choice or model selection. The decisions that determine stability are made before the first agent is built.

Capgemini’s 2024 research found that 45% of organizations scaling AI operations are exploring multi-agent architectures. The gap between exploration and shipping reliability lies almost entirely in the orchestration layer.

How Does an AI Agent Development Partner Support Orchestration Design?

An AI agent development partner changes what gets caught and when. Experienced teams have seen where orchestration designs break: role overlap, missing memory contracts, and unscoped tool access get flagged before deployment, not after.

Bytes Technolab runs a structured two-week orchestration design sprint before any agent is built. The output is an architecture specification that serves as the implementation contract across the development cycle.

Six Principles for Production-Ready Orchestration

- Define agent boundaries before building agents: Role contracts must exist before any agent is implemented. They specify what each agent can do, what it cannot, and what triggers a handoff.

- Separate orchestration logic from agent logic: The orchestrator should be a deterministic layer with defined routing rules, even if those rules are informed by LLM calls.

- Build the memory contract first: Decide what state is shared, what is agent-local, and who can write to the shared state. Retrofitting this onto a running system is expensive and error-prone.

- Instrument everything from day one: Every agent action, tool call, and handoff should emit a structured log. Observability added after deployment catches yesterday’s failures, not tomorrow’s.

- Set hard resource limits at the orchestration layer: Token budgets, execution time limits, and loop termination conditions belong in the orchestrator, not in individual agent prompts.

- Design for failure, not for success: Every task path needs a defined failure mode. What happens when Agent 3 doesn’t respond belongs in the architecture, not in a post-incident review.
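Two of these principles, structured logging and a defined failure path, can be sketched together. The function names, event names, and retry count below are assumptions for illustration; a real system would ship the JSON records to a tracing or log pipeline and choose its own escalation target.

```python
import json
import logging
import time
from typing import Callable

logger = logging.getLogger("orchestrator")


def log_event(event: str, **fields) -> str:
    # One JSON object per event, so traces can be filtered by task, agent, or attempt.
    record = json.dumps({"ts": time.time(), "event": event, **fields}, sort_keys=True)
    logger.info(record)
    return record


def run_with_fallback(
    task_id: str,
    primary: Callable[[], str],
    fallback: Callable[[], str],
    max_retries: int = 2,
) -> str:
    # Retry the primary agent a bounded number of times, then hand off to the fallback.
    for attempt in range(1, max_retries + 1):
        try:
            result = primary()
            log_event("task_completed", task_id=task_id, attempt=attempt)
            return result
        except Exception as exc:
            log_event("task_failed", task_id=task_id, attempt=attempt, error=repr(exc))
    log_event("task_escalated", task_id=task_id)
    return fallback()
```

The fallback here could be another agent, a cached answer, or a human queue; the point of the sketch is that the path exists in code and every step along it leaves a record.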

The architecture decisions made in week one determine whether the system shipped in month three survives week four of production.

AI Agent Orchestration Is Not Optional: It Is the System Itself

You started with a real tension: agents that work in isolation but break the moment they need to coordinate. The solution exists, but only when the coordination layer is treated as the primary architecture.

Multi-agent AI systems don’t fail because the agents are weak. They fail because the coordination layer was designed as an afterthought, or never designed at all.

The Deloitte projection, from $8.5B to $35B, with a 40% failure rate, reflects exactly this: teams building agent capability without orchestration discipline. Bytes Technolab works with startups, scale-ups, and mid-enterprises to close that gap before the first agent is built, with role contracts, memory models, and observability pipelines specified upfront.

The question isn’t whether your use case needs AI agent orchestration. The question is whether you design it deliberately or discover its absence in production.

Frequently Asked Questions

AI agent orchestration is the coordination layer that manages how multiple agents are assigned tasks, share context, use tools, and hand off work. Without it, agents operate in isolation. With it, they function as a single system that holds up under production load.

Agent orchestration coordinates task execution between predefined agents using fixed rules. AI agent orchestration goes further: it adds adaptive task routing, shared memory, mid-task replanning, and agent-to-agent negotiation. The difference determines whether your system handles only known workflows or genuinely open-ended goals.

The multi-agent orchestrator is the decision layer above individual agents. It decomposes goals into subtasks, routes each to the right agent, tracks execution state, and triggers replanning when something fails or returns an unexpected result. It’s not an agent: it’s the system’s coordination spine.

Workflow automation executes fixed sequences with no deviation. Custom generative AI solutions built on agent orchestration handle branching, ambiguity, and mid-task replanning: when a step fails or returns an unexpected result, the orchestrator adapts. Workflow tools stop and wait for a human. Orchestrators don’t.

Bytes Technolab partners with startups, scale-ups, and mid-enterprises to design orchestration architectures before any agent is built. The team delivers role contracts, memory models, and governance layers as a pre-build specification. CTOs enter development with a design that has been pressure-tested, not assumed.
